Extreme Efficiency for Every Generation

Custom hardware and a tightly integrated software stack that deliver faster inference and reduce costs by up to 90%.

Engineered for AI Inference

Custom AI-native servers running best-in-class GPUs with end-to-end optimized compute, storage, networking and cooling.

AI-Native Hardware Stack

Custom servers, storage, networking and cooling, built from the ground up for AI inference.

+100% Inference Throughput

GPUs run at near 100% utilization, doubling throughput and halving the effective cost per generation.

Parallel Large-Model Inference

Shard large models across local GPUs for the lowest latency in the industry.

Any Model, No Rewrites

Run any open-source model natively, with no porting or adaptation needed.

Low-Level Software Tuning

BIOS, kernel and OS tuned so more of your spend becomes actual compute.

Lowest Cost Per Generation

Dense pods and full GPU utilization cut the cost per generation by up to 90%, a 10x reduction.

Dominates Traditional Data Centers

Purpose-built infrastructure that outperforms generic cloud providers on every metric.

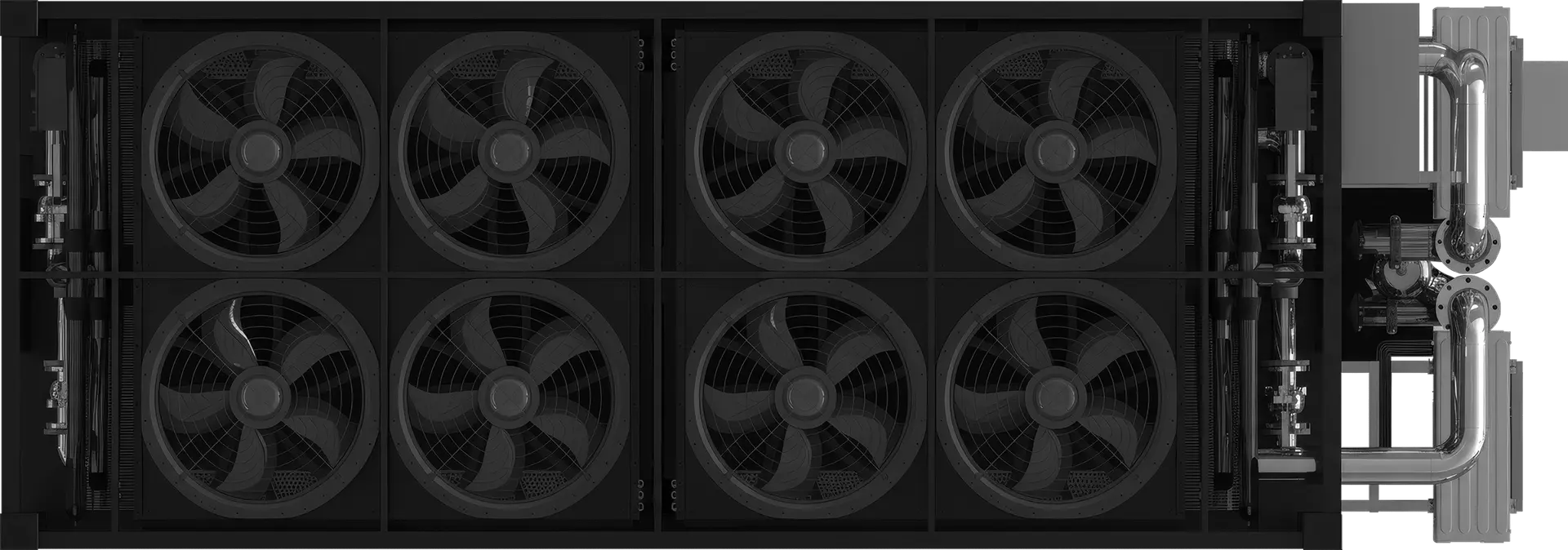

The System Powering Our Engine

Optimized for cost and performance, delivering the highest-density AI compute available.

High-Efficiency Water Cooling

Advanced cooling system for sustained peak performance.

High-Performance Networking

Custom ultra-low-latency PCIe networking with intelligent request routing.

Realtime Model Lake

Sub-second cold starts with enterprise-grade storage and instant availability.

Local Renewable Energy

Renewable power drawn directly where it is generated, for the lowest cost and better sustainability.

Built for Sustained Performance

Parallel GPUs, high-frequency CPUs, and software tuned for maximum throughput.

2x AI Inference Throughput

Off-the-shelf GPU servers waste 40-60% of their GPU throughput because of CPU-frequency and memory bottlenecks. Our Inference Nodes sustain 100% GPU throughput across multiple GPUs, continuously.
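To make the arithmetic behind these figures concrete, here is a minimal sketch. It assumes that "wasted throughput" means the fraction of peak GPU throughput a server fails to deliver, and that hourly server cost stays fixed; the function names and the simple cost model are illustrative assumptions, not part of our platform.

```python
def throughput_gain(wasted_fraction: float) -> float:
    """Factor by which throughput rises when a server that wastes
    `wasted_fraction` of its peak throughput is brought to full throughput.

    Assumption: delivered throughput = peak * (1 - wasted_fraction).
    """
    if not 0.0 <= wasted_fraction < 1.0:
        raise ValueError("wasted_fraction must be in [0, 1)")
    return 1.0 / (1.0 - wasted_fraction)


def relative_cost_per_generation(wasted_fraction: float) -> float:
    """Cost per generation at full throughput, relative to the wasteful
    baseline, assuming the hourly cost of the server is unchanged."""
    return 1.0 / throughput_gain(wasted_fraction)


if __name__ == "__main__":
    # The 40-60% range quoted above, plus the midpoint.
    for wasted in (0.4, 0.5, 0.6):
        gain = throughput_gain(wasted)
        cost = relative_cost_per_generation(wasted)
        print(f"{wasted:.0%} wasted: {gain:.2f}x throughput, "
              f"{cost:.2f}x cost per generation")
```

Under these assumptions, recovering 50% wasted throughput corresponds to 2x throughput and half the cost per generation. Actual savings depend on the workload and pricing.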

Parallel Large-Model Inference

Store nearly any large model locally and parallelize inference across multiple GPUs, delivering the lowest end-to-end inference time across models and modalities.

No Model Limitations

Run AI models natively on Inference Nodes with all supported capabilities. No customization or adaptation is needed: our hardware runs any model that runs on a standard GPU server.

Low-Level Optimizations

We optimize everything from the BIOS and kernel to the OS distribution and its configuration to minimize latency and maximize throughput. These optimizations can increase the gains from the hardware alone by up to 100%.

Globally Distributed Infrastructure

A globally distributed inference platform built for low latency and unlimited scale.

Local Availability

Global points of presence with local Model Lakes for low latency and sub-second cold starts.

Universal Compatibility

Any Pod can run any model, with no restrictions on model or inference type.

Elastic Scaling

Capacity scales across distributed GPU infrastructure, with no limits.

Geo Proximity

Deployed near your users for the lowest possible latency.

Ready to Scale Your AI?

Experience the fastest inference at the lowest cost. Start building today.